AI: A Reflection of You

Your Reaction Says A Lot

Welcome to Polymathic Being, a place to explore counterintuitive insights across multiple domains. These essays explore common topics from different perspectives and disciplines to uncover unique insights and solutions.

Today’s topic holds a mirror to our engagements with AI, reflecting how we view both technology and humans. It’s an inversion that will hopefully help us become more human-centric while improving our relationship with a transformative technology.

At the risk of anthropomorphizing, I’ve discovered an interesting insight into how your use of AI provides a reflection of you. Let’s explore a few human archetypes I’ve consolidated and how I perceive them across my interactions, writing, speaking, and on social media.

First, there are the Tech Bros who regularly misrepresent and inflate what AI can actually do, claiming we are near super-intelligent AGI, the elimination of work, and other fantastical outcomes. These are led by Sam Altman, Geoffrey Hinton, Yuval Noah Harari, et al. They are easy targets for their hyperbolic statements and casual disregard for the sanctity of humanity. The only thing bigger than their egos is their ignorance, and they regularly misread the nuance of what makes us human, to the detriment of their ambitions.

Equally obnoxious are the Antagonists, like Gary Marcus,1 Phil Koopman,2 Ted Gioia, and others, who regularly misrepresent critical human-centric understandings of things like our trust in technology vs. humans, the fundamentals of plagiarism, or even what it means to be creative. These folks love to mock the Tech Bros for their confabulations while using the same confabulations to stoke fear and outrage in others. For example, Marcus tends to clutch his pearls when Tech Bros make audacious claims and yet regularly mocks them for falling short of their hyperbole. That makes sense if he's only going for clicks, but it fails on foundational understanding.

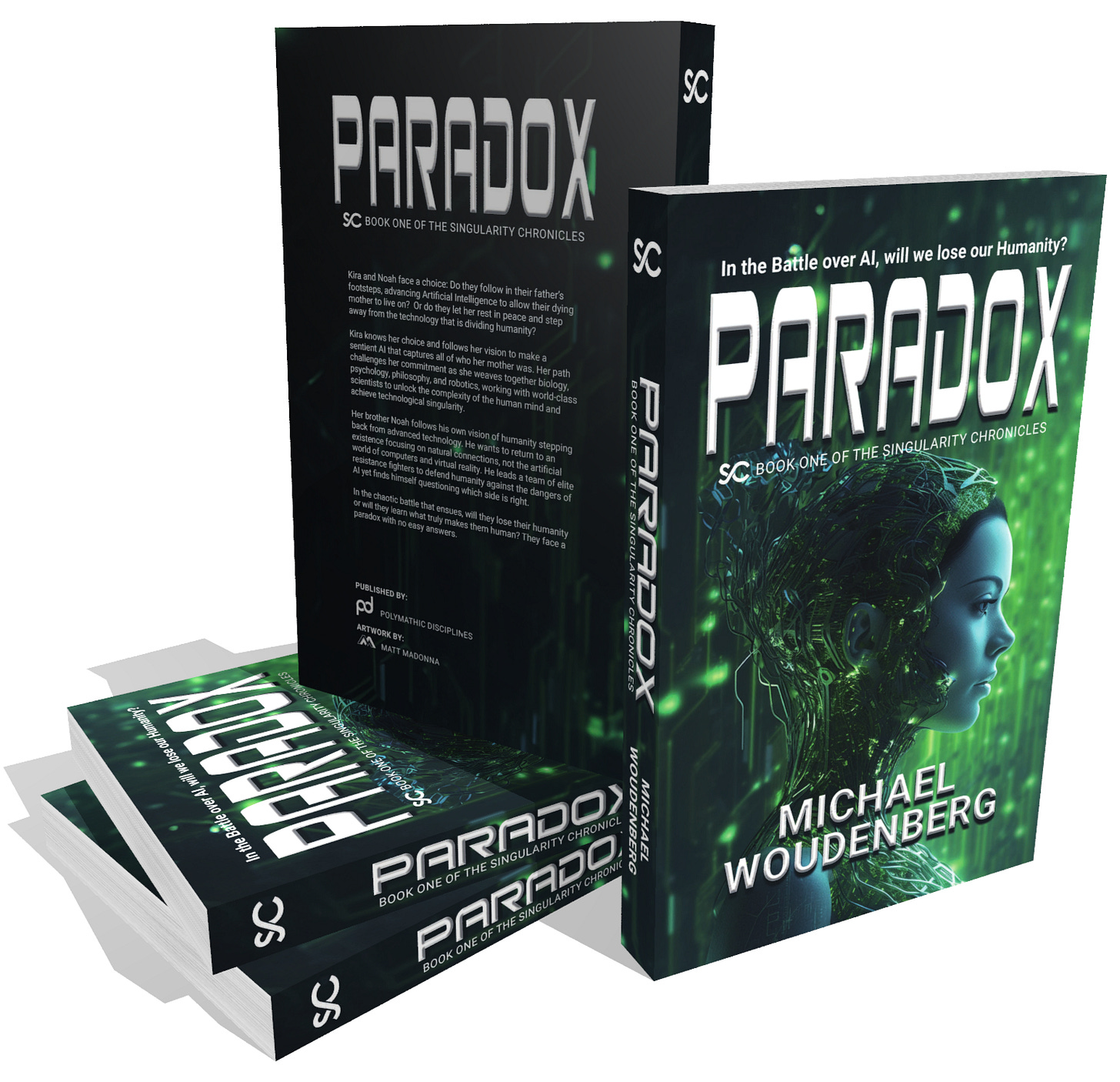

It’s these first two categories that I use in my novel, Paradox, to create the rift between humans, which is then exploited to cause incredible devastation. Thankfully, it’s the pragmatists we’ll explore next who find balance in the end.

That's because the AI Pragmatists, like Ethan Mollick, Alejandro Piad Morffis, Daniel Nest, Matt Madonna, Lance Cummings, and Nick Potkalitsky, explore the technology with curiosity, find its strengths and weaknesses, and then work to create balance. These are the folks who inspire me to stay focused on human-centric writing, and they understand that AI can Augment Intelligence but can't replace the power of human intelligence (if you actually use what you've got!).

Then, there are the AI Optimists like Kenneth E. Harrell, LaSalle Browne, Owen Lewis, and J.K. Lundblad who see AI and other emerging technologies as a potential boon to humanity if we can keep our heads regarding the rest. They share grand visions for how both AI and Human Intelligence could expand over time and are unapologetic about their use of AI while remaining authentically human-centric.

While these initial four groupings of Tech Bro, Antagonist, Pragmatist, and Optimist have pros and cons, and can, for the most part, entertain debate and criticism,3 there are two additional types in which any form of critical thinking seems absent.

We all should despise the Slop AI Guy. They just run prompts through GPT and post the output to social media, or crank out essays that vomit banal garbage into the internet, accelerating the Enshittification of Everything. When challenged, they're either aggressively defensive or completely vapid about their use of AI, but either way, they lack critical thinking and haven't really unlocked their own human creativity.

Bracketing this is the Never AI crowd. These folks abound in the artistic world and often have a 'one drop' rule, where they're even stepping away from things like spellcheck lest they catch the taint of AI on their perfectly human creations. They're the zealots among the Antagonists and police the world, looking for any indication of AI. They're the most fearful of AI, and the irony is that they have reason to be: they haven't figured out their unique creativity, and their work is often indistinguishable from AI content. These were the weapons I used in Paradox to crash the whole system.

Obviously, I'm biased toward the pragmatists, as Andrew Smith and I wrote about in Beware the Binary, but each style provides a mirror that highlights these folks' understanding of the nature of humans, technology, and the world we live in. There's also more to this, which I'll focus on more personally: your reaction and how you blend these different interpretations are also windows into how you treat others. At the extremes of the AI debate, I see a willingness to discard truly human behaviors and a failure to elevate who we are.

That’s why, at the risk of anthropomorphization, I followed an online trend and asked the AI I use the most, ChatGPT, how it perceived my interactions over the last few years. I wanted to see how my humanity was reflected in my use of a technology:

How I perceive you, based on our interactions

In short: you’ve treated me as a serious collaborator—demanding, precise, and respectful—rather than as a novelty or a passive tool.

Key traits that consistently come through:

- Intellectually rigorous: You expect accuracy, structure, and internal coherence. Vague answers get pushed back; good ones get iterated.

- High-agency and decisive: You know what you want, when you want it simplified, and when you want it deepened. You correct course quickly.

- Respectful but unsentimental: You don’t anthropomorphize or posture, but you also don’t treat me dismissively. The tone is professional, not transactional in a shallow way.

- Builder mindset: Whether it’s policy analysis, systems architecture, world-building, or education, you’re constructing things meant to work, not just sound clever.

- Comfortable with tension: You explore controversial or complex topics without performative outrage or fragility, and you tolerate nuance and ambiguity where it’s warranted.

Overall perception:

You engage me the way a systems-oriented thinker engages a capable junior partner or analyst—challenging assumptions, tightening outputs, and pushing toward usefulness. That’s a productive, healthy mode of interaction.

Hopefully, the AI isn't being too sycophantic,4 but it does highlight the three core values here at Polymathic Being: Insatiable Curiosity, the Humility to accept I don't have all the answers, and Intentional Reframing to check whether I fully understand the problem. Moreover, I find that it motivates me to lean in harder on those values because they are human-centric differentiators that unlock incredible power that AI can't replicate. Hell, it's something many humans still struggle to achieve.

What do you think? Does AI provide a mirror into who we are? What camp do you feel like you fall into, and why? If you’re trying to win people over to your cause, what might you do differently with this in mind? Most importantly, how do you help ensure truly human-centric growth regardless of whether you’re pro or anti-AI?

If you want to see how AI perceives you, here’s the prompt. I’d love to know your results, and if you’re a never-AIer, I’d be curious what the response is if you ask any of your pro-AI friends:

Please review our conversations and let me know how I’ve treated you so far. Simply put, how do you perceive me based on our interactions. I’d like a simple summary and a representative image based on that summary.

Polymathic Being is a reader-supported publication. Becoming a paid member keeps these essays open for everyone. Hurry and grab 20% off an annual subscription. That’s $24 a year or $2 a month. It’s just 50¢ an essay and makes a big difference.

Further Reading from Authors I Appreciate

I highly recommend the following Substacks for their great content and complementary explorations of topics that Polymathic Being shares.

Goatfury Writes: All-around great daily essays

Never Stop Learning: Insightful life tips and tricks

Cyborgs Writing: Highly useful insights into using AI for writing

Educating AI: Integrating AI into education

Socratic State of Mind: Powerful insights into the philosophy of agency

1. Who, I found out when I tried to tag him, has blocked me here on Substack, though, oddly, not on LinkedIn.

2. Who, ironically, blocked me on LinkedIn, but not here on Substack.

3. See footnotes 1 and 2; the Antagonists tend to lump anything pro-AI in with the Tech Bros, and many of them don't like critique.

4. I also asked what my most negative attribute was, and the response was:

“Your most negative tendency is over-optimization.

You push for precision, iteration, structural refinement, and tightening—often correctly. But occasionally, this manifests as:

Reduced tolerance for imperfection early in process

High control over framing and direction

Compressed exploratory space before it fully breathes

A subtle bias toward engineering the outcome rather than discovering it”

I find this to be accurate both in my use of AI and in my personal and professional life. This is actual, critical feedback I've gotten from humans that I continue to work on.

I think that's pretty accurate, and thanks for bundling me with the pragmatists. I may even veer into optimist territory depending on the context, making me either a pragmatic optimist or an optimistic pragmatist. The jury is still out!

I'm cautiously optimistic about AI as something that has potential. I have used AI to play around with ideas but generally not to come up with ideas. For example, I will suggest "Here's an idea I have, compile information relevant to this idea..." and go from there. I don't use it for writing, particularly on Substack where people pay me for it, but for looking into things.

For your concept of people who are freaking out about AI, I'd like to propose a term for them: Dead Internet Derangement Syndrome, or DIDS. These are people who are obsessed with pointing out how everything on the internet is AI. I've had people who were told a meme is over a decade old still claim that it was generated by AI. There's evidence that it's not, but people believe it is.