The Plagiarism of AI

A Technology Stands Accused

Welcome to Polymathic Being, a place to explore counterintuitive insights across multiple domains. These essays explore common topics from different perspectives and disciplines to uncover unique insights and solutions.

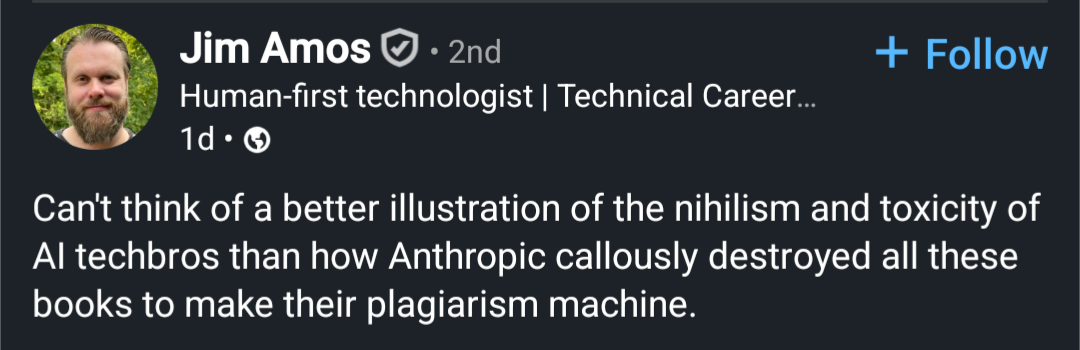

Today's topic investigates the accusation of plagiarism against Artificial Intelligence (AI), such as the popular Large Language Models (LLMs) and image generators. It’s a serious critique that warrants a thorough investigation to gain a deeper understanding of AI and what it means to be human.

You don’t have to spend long in the creative spaces on social media before you find a post accusing AI of plagiarism. The problem is that, most of the time, it’s a misuse of what plagiarism means. Since it’s essential to get right, we’ll look at what plagiarism is and isn’t, the legal carve-out for ‘Fair Use’ of copyrighted works, and then what artists call inspiration and how humans actually create through mimicry.

Plagiarism

Let’s start with the actual definition:

Plagiarize: to steal and pass off (the ideas or words of another) as one's own : use (another's production) without crediting the source

The term has a fascinating history, originating from the Latin plagiarius, which means “kidnapper.” This evokes the forceful taking of a person or, in this case, an idea and claiming it as your own, as opposed to the theft of a physical object.

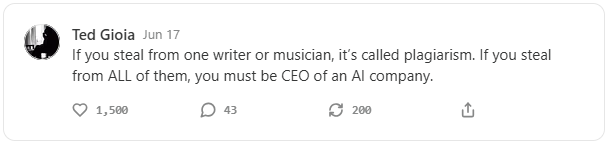

Ted Gioia recently wrote “Help!-AI Is Stealing My Readers,” where he laments:

There’s some heavy irony here. The AI chatbots have been trained on my books. So if a scammer uses AI to write a jazz book, it probably regurgitates things I’ve actually written myself in the past.

The problem with this accusation of AI plagiarizing is that it should be detectable. But that’s not what we find at all. I teach Master's students at the University of Arkansas, which provides tools to detect plagiarism and others to detect AI. I’ve used them on Substack content, and, thus far, no content identified as AI has been found to be plagiarized, and no content identified as plagiarized has been flagged as AI.

What Ted is complaining about is a series of jazz books that AI may have written, but with enough deviation in both names and content that it is not considered plagiarism. It’s a technique called copycatting and has been going on for millennia across all commercial sectors, from food brands and luxury goods to books and movies.[1]

Plagiarism is stealing and claiming someone’s work as your own, not mimicking a popular book. Further, Ted’s copycat book isn’t an AI problem; it’s a human one, and while his example is unscrupulous, it’s legal and common. Now, let’s address the use of books to train AI, which is defended under the concept of Fair Use.

Fair Use

U.S. copyright law has specific guidance on the repurposing of written or visual art known as Fair Use. To meet this criterion, you must consider:

Purpose & character of the use: Is the use transformative (different function, adds new expression or meaning)? Is it commercial or nonprofit? Is there a public-interest element?

Nature of the copyrighted work: How creative is the original? Is it published? Factual or thinly creative works get less protection; highly imaginative, unpublished works get more.

Amount & substantiality used: This assesses both the quantity and qualitative value of what was taken relative to the whole work. Using the heart of a song may weigh against fair use even if the excerpt is short, while copying an entire work can still be fair if the purpose requires it (e.g., full-text search or machine-learning training).

Effect on the potential market: Does the secondary use replace the original or usurp a likely licensing market?

These criteria were evaluated in a recent lawsuit against Anthropic, which used books to train its LLM. The judge found the use to be transformative, accepted that training required the entire book, and ruled it acceptable under Fair Use. The court did find that Anthropic violated copyright law by providing Claude with access to a website containing pirated books. This ruling means that you can train on copyrighted works, but you can’t steal them, and multiple court cases have judged similarly based on piracy laws.[2][3] Further, evidence of ‘plagiarism’ appears to have been heavily manipulated to force the reproduction rather than reflecting standard behavior of the AI, as evidenced by The New York Times lawsuit against OpenAI, which found that you had to ‘hack’ the AI to get it to produce anything similar to an exact copy.

Ted’s copycat book, if it used AI, falls under Fair Use as a function of training the AI.[4] The resulting mimicry is neither plagiarism nor a violation of Fair Use, since it’s one more step removed from the training. In the creative world, this has already been a mess to untangle, but there’s still one more, even murkier, element: we call it inspiration.

Inspiration

Ted rightly points out that he spent decades studying jazz to write his book, and the scammer merely had to put together a few prompts, probably based on an outline structure common to the genre, and paste them together. However, consider that to write his popular book The History of Jazz, Ted spent years studying other people’s work, evident in his copious footnotes and acknowledgments.

As we explored in AI Is[n't] Killing Artists, Ted created his book by studying the greats and through mimicry. In the non-fiction world, we cite our sources, and these sources are the foundation on which we derive new insights. This is the model that I use here on Polymathic Being because it adds credibility by, as Newton said, “standing on the shoulders of giants,” and avoids accusations of plagiarism.

However, when you delve into fiction, you no longer use citations; instead, you refer to them as inspirations. I can’t plagiarize, but I can emulate.[5] In fact, one of the most popular questions I’m asked in author interviews is: “What books inspired The Singularity Chronicles?” I can easily say three books and authors had an impact:

Ender’s Game (and Ender’s Saga writ large) by Orson Scott Card

I tried to capture the intrigue and adventure to keep the tempo moving.

Stranger in a Strange Land by Robert Heinlein

It provided a model for the spiritual/political philosophy.

The Wandering Inn, a web serial by Pirateaba

I emulated the prose to avoid the dense sentence structures typical of Sci-Fi.

This is where a lot of artists have a cognitive glitch because, as we explored in Can AI Be Creative, there’s something many fail to understand when it comes to human creativity: we aren’t as uniquely creative as we think we are. To create art, we have to study, emulate, and learn from other artists. Artists understand inspiration, and yet many haven’t considered how much they rely on the books and art they’ve learned from.

As a simple example, a plot twist can only exist if an author deviates from what’s expected, and what’s expected is the profile of that genre, i.e., the common plotline, which is also known as a trope. Artists also follow established norms, and the good artists have learned how to build on their inspirations and subtly break those norms in just the right ways. That’s what we consider creative.

AI being trained on books is not dissimilar from how humans train to write by studying the creative works of others. Take a look at any creative writing or art course, and you’ll quickly see the similarity. It’s also a problem that extends beyond writing, as recently described by Mark Palmer in “My Opening Farewell to AI Loathers,” where he shows that the misunderstanding of human creation and how AI works also permeates Data Science.

This isn’t to say that unethical use of AI, or the use of AI to replace human writing, or just using AI to produce content, is OK. I’m not trying to exonerate AI writ large, but to show how misapplied accusations against AI cause us to misunderstand the very human creative process we feel is threatened.

Summary

To wrap up all these threads, I’ll distill it down to these points:

AI isn’t plagiarizing; however, it is trained on artists’ content, just as every living artist has learned from other artists.

The training falls under Fair Use, which allows for the derivative and transformative use of creative work with varying protections based on originality.

Humans create through social learning and mimicry, and we call that inspiration.

The use of pirated material leaves AI companies open to liability, not because they used the material, but because they didn’t legally acquire it.

Humans are using AI not to create, but to profit.

Swinging back to Ted’s article, AI isn’t stealing his readers. That’s being done by a human using the latest technology tools, just as he describes scammers using technology to steal his identity for decades. And that’s where it comes back to a human-centric perspective, because it’s humans who are the biggest threat with AI.

It really doesn’t help to make accusations of plagiarism or malicious intent against a tool, and it doesn’t help to misunderstand how AI works or how humans are creative. It sucks that unscrupulous people are using AI to scam people, but AI is not the root cause, just a useful scapegoat. As I’ve said before, I hate writing about AI, but it’s a useful literary foil to help us understand the world we live in and, even better, what makes us human!

[1] Deep Impact vs. Armageddon; Antz vs. A Bug’s Life; Dante’s Peak vs. Volcano; White House Down vs. Olympus Has Fallen; The Illusionist vs. The Prestige

[2] There’s another in the queue now that will be interesting because it’s a copyright suit which is relying on challenges with training. It’s a big case with large consequences but that doesn’t mean it

[3] Here's more on the Anthropic case: https://deadline.com/2025/09/anthropic-ai-lawsuit-settlement-1-5-billion-1236509423/

[4] Johns Hopkins Press has announced the licensing of their books to train an AI with compensation to the authors for each title.

[5] There’s also a lot of emulation in non-fiction. In fact, there are entire templates to copy whole-cloth for non-fiction writers.
